Following last week’s leak of proposed new rules on the use of AI systems, the European Commission looks likely to ban some “unacceptable” uses of AI in Europe.

The Leak and the Letter

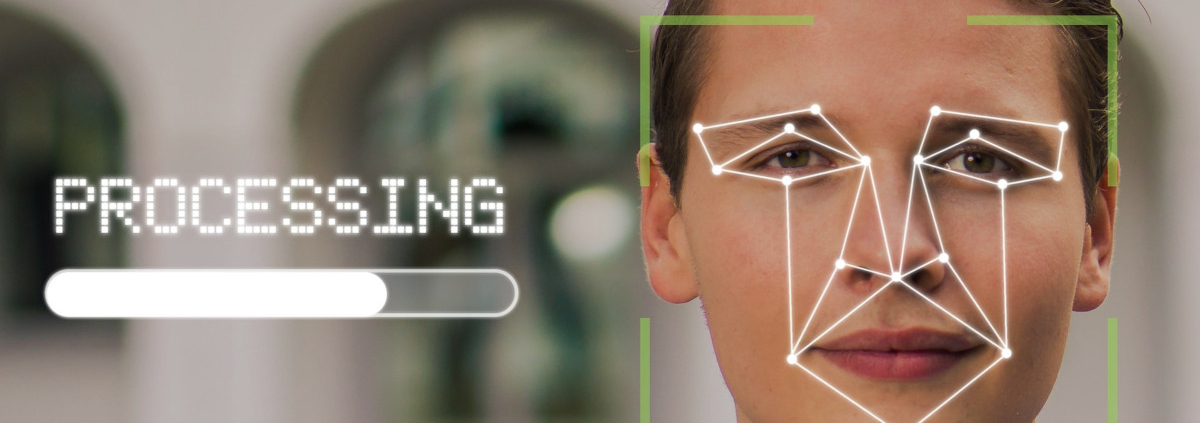

This latest announcement follows two earlier developments. The European Commission now says it aims to ban “AI systems considered a clear threat to the safety, livelihoods and rights of people”, which it classes as “unacceptable”. Last week saw the ‘leak’ of the proposed new rules governing the use of AI, particularly for biometric surveillance. There was also a letter from 40 MEPs calling for a ban on the use of facial recognition and other types of biometric surveillance in public places.

Latest

The EC’s latest round of announcements about the proposed AI rules highlights three features. The rules will follow a risk-based approach, they will apply across all EU Member States, and they will be based on a future-proof definition of AI.

Risk-Based

The European Commission’s new rules will class “AI systems considered a clear threat to the safety, livelihoods and rights of people” as an “unacceptable” risk. Examples of unacceptable risk include “AI systems or applications that manipulate human behaviour to circumvent users’ free will (e.g. toys using voice assistance encouraging dangerous behaviour of minors) and systems that allow ‘social scoring’ by governments.”

High Risk – Remote Biometric Identification Systems

Under the proposed rules, high-risk AI systems include those used in critical infrastructure, law enforcement, and migration, asylum and border control management. The EC says that these and other high-risk AI systems will be subject to strict obligations. “All remote biometric identification systems” are singled out in particular. Their live use in publicly accessible spaces for law enforcement purposes will be allowed only under “narrow exceptions”. Examples include searching for a missing child, preventing an imminent terrorist threat, or finding and identifying a perpetrator or suspect of a serious criminal offence.

Other Risk Categories

The other risk categories in the proposed EC AI rules are limited risk (e.g. chatbots) and minimal risk (e.g. AI-enabled video games or spam filters).

Governance

Supervision of the new rules is likely to be the responsibility of each Member State’s competent national market surveillance authority. A European Artificial Intelligence Board will also be set up to facilitate the rules’ implementation and drive the development of standards for AI.

It is understood that the rules will apply both inside and outside the EU if an AI system is available in the EU or if its use affects people who are located in the EU.

What Does This Mean For Your Business?

AI is now built into so many systems and services across Europe that rules and legislation clearly need to keep pace with its rollout, to protect citizens from its risks and threats. Mass public biometric surveillance, such as facial recognition, is an obvious area of concern. Privacy groups such as Big Brother Watch monitor its use, and 40 MEPs recently wrote a letter calling for it to be banned. The proposed rules, however, are designed to cover the many different uses of AI, including limited and minimal risk uses. Their stated aim is to make Europe a “global hub for trustworthy Artificial Intelligence (AI)”. If the rules can be enforced successfully, they will provide some protection for citizens. They will also help businesses and their customers by offering guidance on using AI-based systems in a responsible and compliant way.

If you would like to discuss your technology requirements, please:

- Email: hello@gmal.co.uk

- Visit our contact us page

- Or call 020 8778 7759
